Precision Engineering in the Age of AI Autonomy: The IWV Standard

A Deep Dive into the NotesXML Development Process

IWV Digital Solutions LLC

By IWV Digital Solutions — April 2026 (Revised)

1. Introduction

NotesXML is a cross-platform note-taking application built by IWV Digital Solutions LLC. It runs on Android, Windows, Linux, and Web browsers from a shared codebase. It supports 13 note types, local AI inference with downloadable language models, an XML-based portable file format (NXL), and a 100% ad-free commercial model. It is built on a local-first, privacy-centric architecture called the Zero-Exposure Protocol: user data never touches an IWV server.

What makes this project distinctive is not the product alone, but the process used to build it. NotesXML is developed through a tightly integrated partnership between a human developer-owner and an AI development assistant (Claude, by Anthropic), governed by a formal set of evolving standards, audit specifications, and documentation requirements. Every defect discovered during testing doesn't just result in a code fix — it can trigger updates to the development standards themselves, to the code audit criteria, and to the project's governing specifications.

This article describes that process in detail, including the feedback loops that make it self-improving.

2. The Development Ecosystem

The NotesXML development ecosystem comprises three interacting systems: the human-AI development partnership, a body of governing documents, and a set of formal feedback loops that connect discovered issues back to process improvements.

The human owner sets direction, reviews work, performs real-device testing, and makes architectural decisions. The AI assistant implements code changes, produces documentation, performs code audits, and follows a comprehensive rule set that has been refined through dozens of real incidents. The governing documents — the SRS, ICD, LLM Development Standards, Code Audit Specification, Codebase Cross-Reference, and Documentation Management Plan — serve as the shared contract between both parties.

The result is a closed-loop system where the process outputs are not limited to code modules and build artifacts. The process also produces updated versions of its own governing documents, creating a continuously improving development methodology.

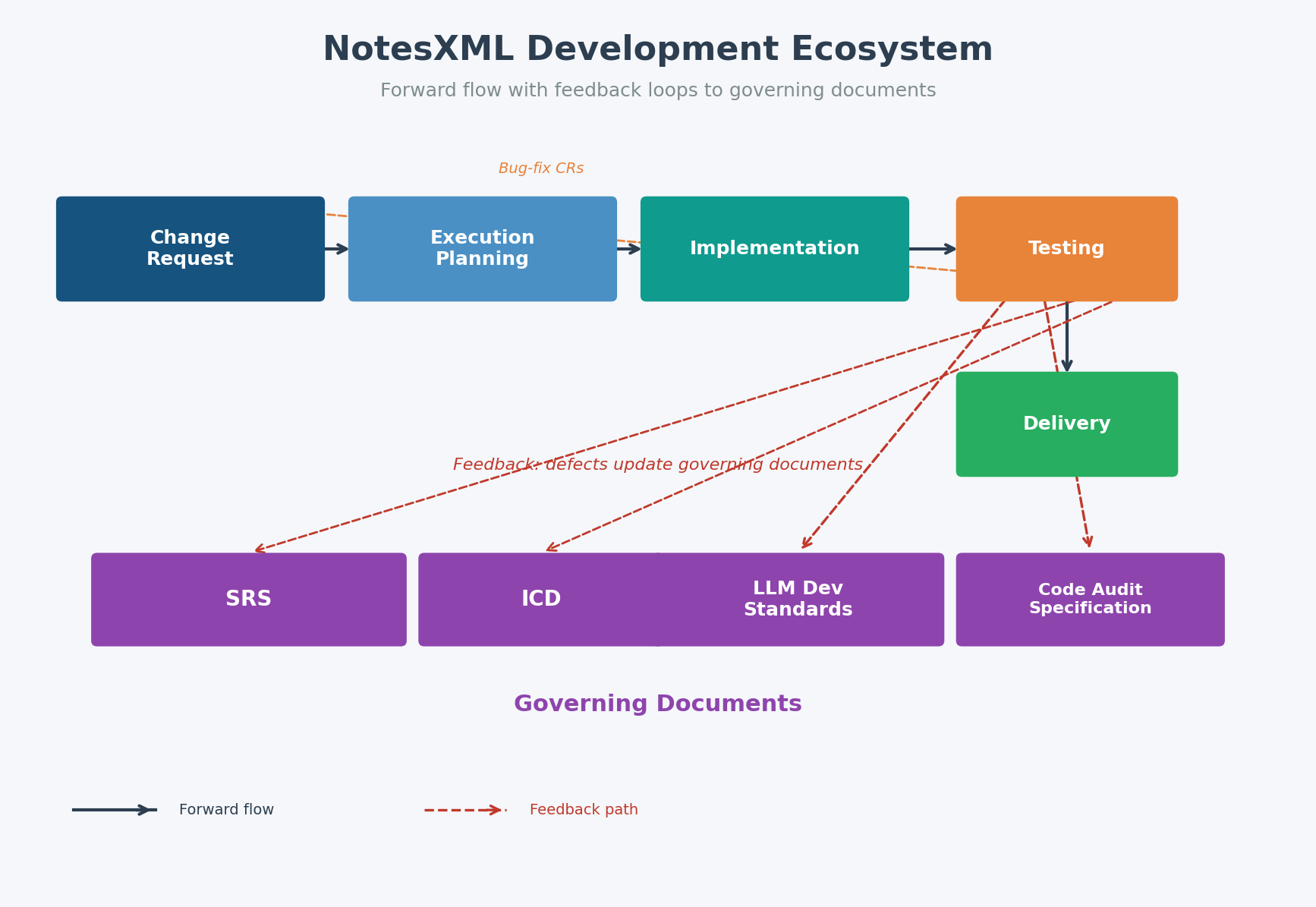

Figure 1 — Forward flow from Change Requests through delivery, with feedback loops updating governing documents.

As shown in Figure 1, the forward flow moves from Change Request creation through execution planning, implementation, testing, and delivery. But the feedback paths — shown as dashed lines — are equally important. Defects discovered during testing generate not only bug-fix Change Requests but also updates to the LLM Development Standards and the Code Audit Specification. This means the process gets stricter and more capable with every incident it encounters.

3. The Governing Document System

NotesXML maintains a hierarchy of living specifications that govern all development activity. These are not static requirements documents written at the start of a project and forgotten. They are versioned, synchronized, and actively updated as the project evolves.

3.1 Software Requirements Specification (SRS)

The SRS contains all functional and non-functional requirements organized by NX-prefixed requirement IDs. Every feature Change Request must reference the SRS requirement(s) it implements. The SRS itself is updated when new requirements are identified during development, when compliance audits reveal gaps between documented requirements and implemented behavior, or when commercial distribution needs drive new feature areas. The SRS spans over 40 major sections covering everything from core note types and the sort system to commercial licensing, calendar functionality, and cross-platform synchronization.

3.2 Interface Control Document (ICD)

The ICD documents every cross-component interface in the system. This includes the JavaScript-to-Kotlin bridge methods for Android, the Electron IPC handlers for desktop, the callback interfaces, and the data flow between subsystems. When any code change adds, removes, or modifies a method signature, the ICD must be updated as part of the same Change Request. The ICD also contains the Interface Dependency Map — a visual representation of the application bootstrap sequence showing the order in which components initialize and the dependencies between them.

3.3 LLM Development Standards

This is the most distinctive document in the system. The LLM Development Standards contain over 40 rules that govern how the AI assistant works on this project. These rules were not written in advance — they were distilled from real incidents. Each rule includes the incident that motivated it, a case study showing the failure pattern, and concrete NEVER/ALWAYS behavioral requirements. The version history reads like a post-mortem log: one version added proportional debugging effort after an over-analysis incident; another added defect ownership rules after the AI assistant attributed a post-delivery failure to the build environment rather than examining its own code first; another added build pipeline traceability after a missing source file went undetected until a platform build failed; still another added workaround preservation rules after a component migration dropped a critical safety measure that had to be rediscovered from scratch.

The LLM Development Standards serve as the AI assistant's operational manual. They include mandatory pre-code checklists, rejection criteria that prevent delivery of non-compliant work, and a correction protocol that governs how errors in AI analysis are acknowledged and remediated.

3.4 Code Audit Specification

The Code Audit LLM Specification defines a formal audit process with 17 critical quality checks that evaluate code quality, interface consistency, type compliance, cross-platform parity, method resolution, and reachability validation. Each check has defined scoring criteria, and the results roll up into a weighted Code Health Score. New checks are added when recurring bug patterns are identified — for example, after one audit discovered IPC return value unwrapping bugs, a dedicated critical check was created for that pattern. After another audit found functions that appeared cross-platform but silently failed on specific platforms due to missing code paths, a Platform Completeness check was added. A separate addendum (the Environment Parity Check Specification) defines a regression gate that activates after the platform bridge migration is complete, ensuring no new code bypasses the unified bridge interface.
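As a rough illustration of how per-check results might roll up into a weighted score, consider the sketch below. The check names are borrowed from the text, but the weights, the 0-to-1 scoring scale, and the 0-100 output range are assumptions made for this example; the actual scoring criteria are defined in the specification and are not reproduced here.

```typescript
// Hypothetical shape of one audit check result. The real specification's
// fields, weights, and scale are not given in this article.
interface CheckResult {
  name: string;
  weight: number; // relative importance of the check (assumed)
  score: number;  // 0 (fail) to 1 (pass), an assumed scale
}

// Weighted average of the check scores, scaled to 0-100.
function codeHealthScore(results: CheckResult[]): number {
  const totalWeight = results.reduce((sum, r) => sum + r.weight, 0);
  if (totalWeight === 0) {
    throw new Error("at least one check with non-zero weight is required");
  }
  const weighted = results.reduce((sum, r) => sum + r.weight * r.score, 0);
  return (weighted / totalWeight) * 100;
}

// Illustrative data only: three checks with made-up weights and scores.
const sampleResults: CheckResult[] = [
  { name: "IPC return value unwrapping", weight: 3, score: 1 },
  { name: "Platform completeness", weight: 2, score: 0.5 },
  { name: "Reachability validation", weight: 1, score: 0 },
];
const healthScore = codeHealthScore(sampleResults);
```

With a weighted average, adding a new check changes the denominator as well as the numerator, so scores produced under different specification versions are not directly comparable unless the weights are recorded alongside each report.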

3.5 Documentation Management Plan

The Documentation Management Plan classifies all project documents into three tiers (L1 Critical, L2 Supporting, L3 Reference) and defines mandatory synchronization triggers. When code changes occur — such as adding a new JavaScript interface method or changing a build configuration — the plan specifies exactly which documents must be updated. This prevents specification drift, where the code evolves but the documentation stays frozen at an earlier state.

3.6 Codebase Cross-Reference

The Codebase Cross-Reference is a living inventory of the entire modular codebase: every source module with its load order and assembly position, every major API surface with its owning module and callers, every bridge method with its cross-platform equivalents, and the complete build system file map. It serves as the AI assistant's starting point for any investigation — rather than spending time searching across dozens of files to locate a function or trace a bridge method, the cross-reference provides an authoritative lookup table. Before editing any module, the AI assistant is required to consult the cross-reference to verify the file is actually loaded at runtime and to confirm its ownership of the relevant interfaces. It is updated as part of every Change Request that adds, removes, or renames modules, bridge methods, or build paths.
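The pre-edit lookup described above could be sketched as follows. The entry shape, the convention that a null load order means "not assembled into the runtime bundle", and the module paths are all hypothetical; the real cross-reference is a document, and its structure is not shown in this article.

```typescript
// Hypothetical cross-reference entry for one source module.
interface ModuleEntry {
  path: string;
  loadOrder: number | null; // null = in the repository but not loaded (assumed convention)
  owns: string[];           // API names owned by this module
}

// Returns a list of problems to resolve before editing `path` to change `api`.
// An empty list means the edit targets a loaded module that owns the API.
function checkBeforeEdit(xref: ModuleEntry[], path: string, api: string): string[] {
  const entry = xref.filter((m) => m.path === path)[0];
  if (entry === undefined) {
    return [path + " is not listed in the cross-reference"];
  }
  const problems: string[] = [];
  if (entry.loadOrder === null) {
    problems.push(path + " is not loaded at runtime");
  }
  if (entry.owns.indexOf(api) === -1) {
    const owners = xref
      .filter((m) => m.owns.indexOf(api) !== -1)
      .map((m) => m.path);
    const ownerText = owners.length > 0 ? owners.join(", ") : "no listed module";
    problems.push(api + " is owned by " + ownerText + ", not " + path);
  }
  return problems;
}
```

For example, a stale copy of a module that still exists in the repository but has no load order would be flagged before any time is spent editing it.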

4. The Change Request Lifecycle

Every functional code change in NotesXML — every bug fix, every new feature, every cross-platform correction — is governed by a formal Change Request. The CR system is not bureaucratic overhead; it is the mechanism that connects code changes to requirements, testing, versioning, and documentation updates in a single traceable unit.

A Change Request is always in one of six states: Draft, Review, Approved, In Progress, Completed, or Rejected. Implementation cannot begin until the CR reaches Approved status. Each CR must include an executive summary, evidence-based root cause analysis, a proposed solution with file-level implementation details, a complete list of files modified, testing requirements specifying platforms and expected results, and a version impact assessment.
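The six states and the approval gate can be modeled as a small state machine. The state names come from the text; the transition table is an assumption for illustration, since the article names the states but does not enumerate which moves between them are permitted.

```typescript
// The six states named in the article.
type CrState =
  | "Draft"
  | "Review"
  | "Approved"
  | "In Progress"
  | "Completed"
  | "Rejected";

// Assumed transition table (hypothetical): Review may approve, reject,
// or send the CR back to Draft; Completed and Rejected are terminal.
const crTransitions: Record<CrState, CrState[]> = {
  "Draft": ["Review"],
  "Review": ["Approved", "Rejected", "Draft"],
  "Approved": ["In Progress"],
  "In Progress": ["Completed"],
  "Completed": [],
  "Rejected": [],
};

// Moves a CR to a new state, refusing any transition not in the table.
function advance(from: CrState, to: CrState): CrState {
  if (crTransitions[from].indexOf(to) === -1) {
    throw new Error("illegal transition: " + from + " -> " + to);
  }
  return to;
}

// Implementation corresponds to entering "In Progress", which the table
// allows only from "Approved".
function canStartImplementation(state: CrState): boolean {
  return crTransitions[state].indexOf("In Progress") !== -1;
}
```

Encoding the gate in the transition table, rather than in a separate check, means there is no code path that reaches In Progress without passing through Approved.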

Figure 2 — Change Request Lifecycle with mandatory documentation synchronization triggers.

The bottom half of Figure 2 shows the documentation synchronization triggers: every implementation triggers mandatory updates to related specifications. A new JavaScript interface method requires ICD and potentially SRS updates. A build configuration change requires a Build System Specification update. An NXL file format change requires an NXL Format Specification update. These synchronization requirements are not optional — they are enforced by the Documentation Management Plan and verified during review.
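One way to make these triggers checkable is a lookup table from change type to required documents. The three rules below mirror the examples in the preceding paragraph; the identifiers, the table structure, and the split between "required" and "review also" are hypothetical and are not taken from the Documentation Management Plan itself.

```typescript
// Change kinds drawn from the examples in the text; the identifiers are invented.
type ChangeKind = "js-interface-method" | "build-config" | "nxl-format";

interface SyncRule {
  required: string[];   // documents that must be updated
  reviewAlso: string[]; // documents that may need an update
}

const syncRules: Record<ChangeKind, SyncRule> = {
  "js-interface-method": { required: ["ICD"], reviewAlso: ["SRS"] },
  "build-config": { required: ["Build System Specification"], reviewAlso: [] },
  "nxl-format": { required: ["NXL Format Specification"], reviewAlso: [] },
};

// Returns the required documents that a Change Request has not yet updated.
function missingDocUpdates(changes: ChangeKind[], updatedDocs: string[]): string[] {
  const missing: string[] = [];
  for (const kind of changes) {
    for (const doc of syncRules[kind].required) {
      if (updatedDocs.indexOf(doc) === -1 && missing.indexOf(doc) === -1) {
        missing.push(doc);
      }
    }
  }
  return missing;
}
```

A reviewer, human or AI, could run a check like this against a CR's list of modified files and refuse to mark the CR Completed while the returned list is non-empty.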

The CR numbering format (CR-YYYY-NNN) provides a permanent audit trail. The project has processed hundreds of Change Requests, each one documented with implementation details, testing results, and version impact. This history is itself a process output — a complete record of every change made to the system and why.
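A minimal sketch of validating and generating identifiers in this format follows. It assumes that YYYY is a four-digit year and NNN is a three-digit, zero-padded sequence number, which is the most literal reading of the pattern; the project's actual rules (for example, what happens past 999 in a year) are not stated.

```typescript
interface CrId {
  year: number;
  sequence: number;
}

// Literal reading of CR-YYYY-NNN: four-digit year, three-digit sequence (assumed).
const CR_ID_PATTERN = /^CR-(\d{4})-(\d{3})$/;

// Parses an identifier, returning null when it does not match the format.
function parseCrId(id: string): CrId | null {
  const match = CR_ID_PATTERN.exec(id);
  if (match === null) {
    return null;
  }
  return { year: parseInt(match[1], 10), sequence: parseInt(match[2], 10) };
}

// Formats an identifier, zero-padding the sequence to three digits.
function formatCrId(year: number, sequence: number): string {
  if (sequence < 1 || sequence > 999 || Math.floor(sequence) !== sequence) {
    throw new Error("sequence must be an integer from 1 to 999");
  }
  const padded = ("000" + sequence).slice(-3);
  return "CR-" + year + "-" + padded;
}
```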

5. AI-Assisted Development: Governed Autonomy

The AI development assistant in this process is not a code-completion tool. It is a full participant in the development lifecycle — implementing Change Requests, producing documentation, performing code audits, and debugging cross-platform issues. But it operates under strict governance.

5.1 The Rule System

The LLM Development Standards define mandatory behaviors across every phase of work. Rules cover version control discipline (every code change must increment the version), defect ownership (the AI must trace its own code for the fault before questioning the human's build process), API documentation synchronization (every interface change must update the ICD), modular architecture enforcement, and build pipeline traceability. Each rule was created because its absence caused a real problem.

5.2 The Correction Protocol

When the AI makes an error in analysis, diagnosis, or implementation, a formal correction protocol activates. The AI must immediately acknowledge the error, provide corrected analysis with evidence, explain the methodology failure, propose prevention measures, and update audit specifications if the error reveals a systemic issue. This protocol was created after an early audit produced inaccurate findings due to incomplete file scanning — a failure that was caught during review and led to the creation of mandatory verification checklists.

6. The Code Audit Feedback Loop

The Code Audit is not a one-time quality check; it is a recurring process with its own improvement cycle. The audit specification defines 17 critical quality checks, each designed to catch a specific class of defect. But the specification itself evolves based on what the audits discover.

Figure 3 — Findings flow to code fixes, new audit checks, and updated development standards.

Figure 3 illustrates the three feedback paths from audit findings. The most obvious path generates new Change Requests to fix discovered defects. But the more powerful paths update the Code Audit Specification itself (adding new checks to catch similar defects in the future) and update the LLM Development Standards (adding new rules to prevent the AI from introducing similar defects in the first place).

This creates a ratchet effect: the quality bar can only go up. Each audit cycle catches bugs that the previous specification couldn't detect, and the updated specification ensures those patterns are caught automatically in future audits. A concrete example: one audit discovered multiple call sites where Electron IPC return values were passed directly to JSON.parse without unwrapping, causing silent failures. This single finding created three outputs — Change Requests to fix the affected call sites, a new Critical Check in the Code Audit Specification for that pattern, and updates to the LLM Development Standards to prevent the AI from introducing the same defect in future implementations.
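The unwrapping defect can be illustrated with a small helper. The envelope shape used here (`success`, `data`, `error`) is an assumption made for this sketch; the article does not show what NotesXML's IPC handlers actually return. The underlying problem is general, though: if a handler returns an object wrapping the JSON text, passing that object straight to `JSON.parse` coerces it to the string `"[object Object]"` and throws, and a surrounding try/catch can then hide the failure.

```typescript
// Hypothetical envelope returned by an IPC handler (not the project's real shape).
interface IpcEnvelope {
  success: boolean;
  data?: string;  // JSON text payload when success is true
  error?: string; // message when success is false
}

// Unwraps an IPC result before parsing, failing loudly instead of silently.
function unwrapIpcJson(result: unknown): unknown {
  // Assumption: some call paths may already return raw JSON text.
  if (typeof result === "string") {
    return JSON.parse(result);
  }
  if (typeof result !== "object" || result === null) {
    throw new Error("unexpected IPC result type: " + typeof result);
  }
  const envelope = result as IpcEnvelope;
  if (envelope.success !== true) {
    throw new Error(envelope.error || "IPC call failed");
  }
  if (typeof envelope.data !== "string") {
    throw new Error("IPC envelope has no string payload");
  }
  return JSON.parse(envelope.data);
}
```

Routing every call site through one helper like this also gives an audit check something concrete to look for: any `JSON.parse` applied directly to an IPC result is a candidate finding.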

The most recent additions to the audit specification include platform code path completeness verification, handler parameter utilization and exit path analysis, hardcoded constant validity checking, method resolution tracing, reachability validation, and near-miss reference detection — all driven by real bugs found in real audits.

7. The Build Pipeline

NotesXML follows a modular source architecture. The application is developed as individual JavaScript modules, CSS modules, and Kotlin bridge files, which are assembled into distributable packages for four target platforms: Web browser, Linux AppImage, Windows (Electron), and Android APK.

Figure 4 — Modular source to four distribution platforms and three commercial channels.

The assembly process is managed by a custom build system that reads module markers from the application source and injects the corresponding module contents to produce an assembled application bundle. This bundle is the core artifact that is then packaged for each platform — used directly for web distribution, wrapped in Electron for desktop, and embedded in a WebView for Android.
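The marker-and-inject step can be sketched in a few lines. The marker syntax below (`<!-- MODULE: path -->`) and the function name are hypothetical, chosen only for illustration; the actual NotesXML markers and build tool are not shown in this article. The sketch works on in-memory strings so that it stays independent of any file system layout.

```typescript
// Hypothetical marker syntax: <!-- MODULE: relative/path.js -->
const MODULE_MARKER = /<!--\s*MODULE:\s*([\w./-]+)\s*-->/g;

// Replaces every marker in the shell with the contents of the named module.
// Throws if any marker refers to a module that was not supplied.
function assembleBundle(shell: string, modules: { [path: string]: string }): string {
  const missing: string[] = [];
  const bundle = shell.replace(MODULE_MARKER, (marker: string, path: string) => {
    if (!Object.prototype.hasOwnProperty.call(modules, path)) {
      missing.push(path);
      return marker; // leave the marker in place; the error below reports it
    }
    return modules[path];
  });
  if (missing.length > 0) {
    throw new Error("modules not found: " + missing.join(", "));
  }
  return bundle;
}
```

Using a replacer function rather than a replacement string matters here: module source code often contains `$` sequences, which `String.prototype.replace` would otherwise interpret as substitution patterns.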

A build orchestrator coordinates the full pipeline, including automatic discovery of source files. This was a deliberate design choice made after an incident where a new source file was manually created but not registered with the build system, causing a platform build to fail. Automatic discovery eliminated that class of error entirely: the build system finds what exists rather than relying on a manually maintained list that can silently fall out of sync.

The pipeline feeds three distribution channels: the IWV Digital Solutions company website (offering a direct-purchase option), the Google Play Store for Android, and the Microsoft Store for Windows. Each channel has its own compliance requirements — privacy policies, data safety forms, developer identity verification, and store listing consistency — all documented in a comprehensive Website Requirements Specification.

8. The Closed-Loop Continuous Improvement System

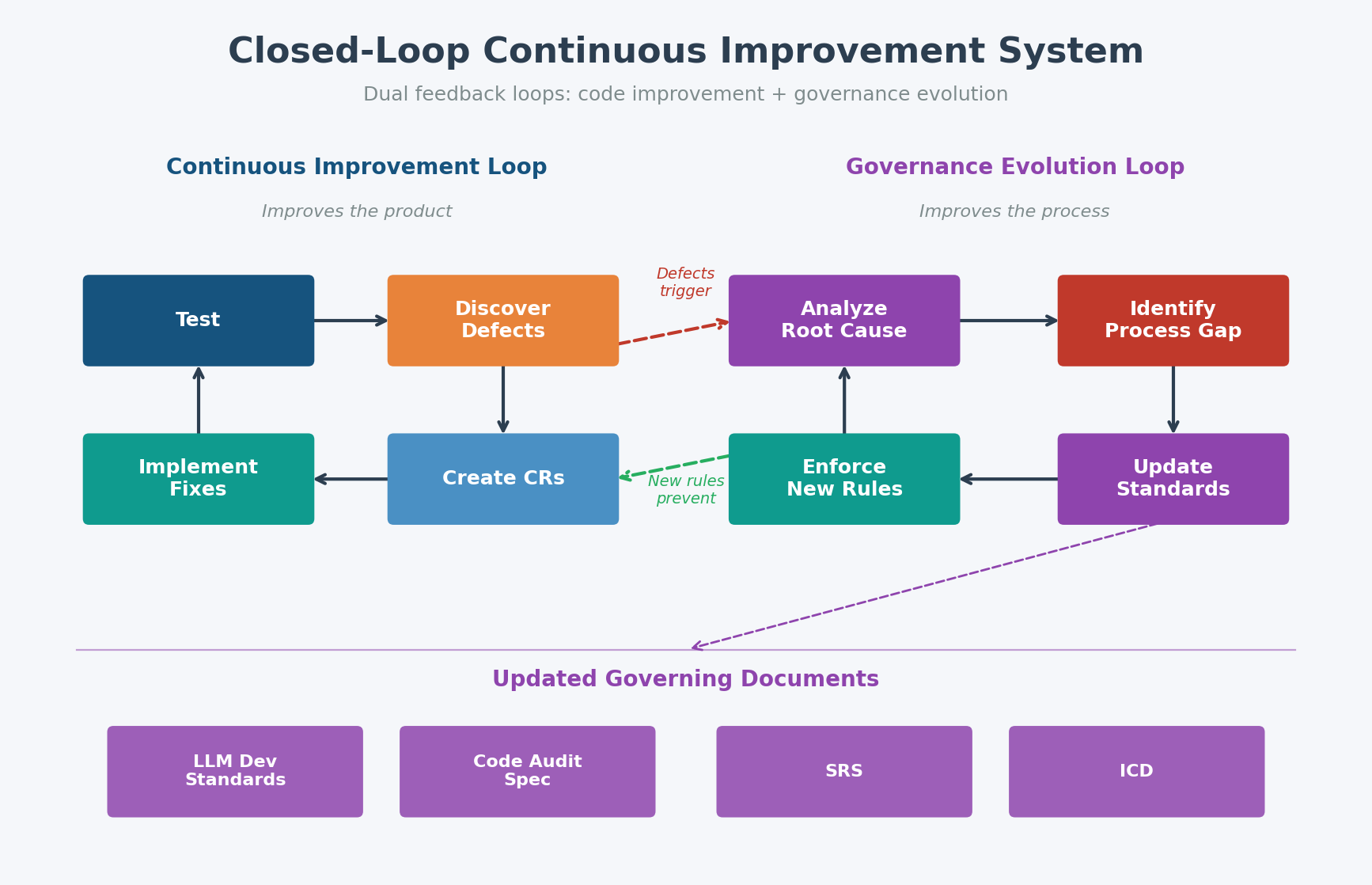

The full picture emerges when all the feedback loops are viewed together. The NotesXML development process is a closed-loop system with two distinct improvement cycles: a Continuous Improvement Loop that feeds code fixes back into implementation, and a Governance Evolution Loop that feeds process insights back into the standards and specifications that govern future development.

Figure 5 — Dual feedback loops: code improvement (left) and governance evolution (right).

The Continuous Improvement Loop (shown on the left of Figure 5) is the traditional software development cycle: test, discover defects, create Change Requests, implement fixes, test again. This loop improves the product.

The Governance Evolution Loop (shown on the right) is what makes this process distinctive. Discovered issues don't just fix code — they update the LLM Development Standards with new rules and incident case studies, update the Code Audit Specification with new critical checks and scoring criteria, update the SRS with refined or new requirements, and update the ICD with corrected interface contracts. This loop improves the process itself.

The two loops interact: the governance evolution loop makes the continuous improvement loop more effective over time. An AI assistant governed by later versions of the LLM Standards makes fewer errors than one governed by earlier versions, because the later version encodes the lessons of dozens of real incidents. A code audit using a later specification catches more defects than an earlier one, because the later version includes checks that were added in response to bugs that the earlier version missed.

9. Process Outputs

The outputs of this development process fall into three categories: code artifacts, documentation artifacts, and governance artifacts.

9.1 Code Artifacts

The code artifacts include JavaScript modules (organized into functional categories), CSS style modules, Kotlin bridge files for Android, Electron main process and preload scripts, build tools, and the assembled distribution packages for all four target platforms. Each code module carries a standardized header with version number, CR reference, and module description.

9.2 Documentation Artifacts

The documentation artifacts include the SRS (covering 40+ sections of requirements), the ICD (documenting every cross-component interface and the bootstrap dependency map), the NXL Format Specification (defining the portable file format), the Codebase Cross-Reference (the authoritative module and API registry), the Change Request History (hundreds of CRs with full implementation records), code audit reports with scored findings, and the Website Requirements Specification for commercial distribution compliance.

9.3 Governance Artifacts

The governance artifacts are the process outputs that other processes rarely produce: the LLM Development Standards (with over 40 rules derived from real incidents), the Code Audit Specification (with 17 weighted critical quality checks and scoring), the Environment Parity Check Specification (defining post-migration regression gates), the Documentation Management Plan (defining synchronization requirements), and the Unified Platform Architecture SCR (defining the bridge pattern that eliminates platform-specific code scatter). These artifacts don't just document how the process works — they actively govern it.

10. Conclusion

Software development processes are typically described in terms of methodologies — Agile, Waterfall, DevOps. The NotesXML development process borrows from all of these, but its defining characteristic is something different: it is a process that systematically learns from its own failures and encodes those lessons into enforceable governance.

When a defect is discovered, the response is not limited to fixing the code. The response asks: Why did this defect get through? What rule was missing that would have prevented it? What audit check would have caught it? What documentation was out of sync? The answers to these questions produce updates to the LLM Development Standards, the Code Audit Specification, and the project's governing documents — creating a steadily tightening quality envelope.

The result is not a perfect process — no process is. But it is a process that gets measurably better with every iteration, that maintains a complete audit trail of its own evolution, and that applies the same standard of rigor to AI-generated code that it applies to human-written code. In an era when AI can write code faster than ever, the IWV Standard ensures that speed serves quality rather than replacing it.

© 2026 IWV Digital Solutions LLC. All rights reserved.

DMCA Registration: DMCA-1070277
